
BLOG: UNDERSTANDING THE DIFFERENCE BETWEEN RCCB AND ELCB

Residual current circuit breakers (RCCBs) and earth leakage circuit breakers (ELCBs) are two types of circuit breaker frequently used in electrical installations for safety. Both protect against electric shock and fire caused by earth faults, but they operate differently and have distinct characteristics. This post examines the main differences between them.

1 Definition

An RCCB is a circuit breaker designed to detect current leaking to earth and disconnect the circuit. It measures the difference between the currents in the live and neutral conductors and trips when that difference exceeds a set threshold (its rated residual operating current). RCCBs are a type of residual current device (RCD).

An ELCB, by contrast, detects earth leakage by voltage rather than by current. The classic voltage-operated ELCB monitors the voltage between the installation's earth conductor (which is connected to exposed metalwork) and a separate reference earth electrode, and trips the circuit when that voltage reaches a predetermined threshold.
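As a rough illustration of the RCCB principle, the sketch below compares the live and neutral currents and trips when their difference reaches the rated residual current. It is a simplification under stated assumptions: the currents are treated as in-phase RMS values, whereas a real RCCB sums the instantaneous currents magnetically in a core-balance transformer, and a real device may trip anywhere between roughly half and all of its rated residual current. The function names and the 30 mA default are illustrative choices, not part of any standard or library.

```python
def residual_current_ma(i_live_a: float, i_neutral_a: float) -> float:
    """Imbalance between live and neutral currents, in milliamps.

    Simplification: both currents are in-phase RMS values in amps.
    """
    return abs(i_live_a - i_neutral_a) * 1000.0


def rccb_should_trip(i_live_a: float, i_neutral_a: float,
                     rated_residual_ma: float = 30.0) -> bool:
    """Trip when the residual current reaches the rated residual current."""
    return residual_current_ma(i_live_a, i_neutral_a) >= rated_residual_ma


# 10 A out on the live conductor, 9.95 A back on neutral: about 50 mA leaks to earth.
print(rccb_should_trip(10.0, 9.95))                          # True
# About 20 mA of leakage: below a 30 mA rating, above a 10 mA rating.
print(rccb_should_trip(10.0, 9.98))                          # False
print(rccb_should_trip(10.0, 9.98, rated_residual_ma=10.0))  # True
```

The same leakage trips a 10 mA device but not a 30 mA one, which is the practical meaning of "more sensitive" in the comparison that follows.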

2 Sensitivity

One of the main differences between RCCBs and ELCBs is their sensitivity to leakage. RCCBs are more sensitive, with rated residual currents available as low as 10 milliamps (mA). This makes them well suited to homes, hospitals, and other public buildings where protecting people is paramount.

ELCBs, in contrast, are less sensitive than RCCBs and only respond to leakage greater than about 30 mA. This makes them less suitable where high sensitivity is required, although they still provide basic protection against electric shock and fire.

3 Installation

Installation requirements are another significant difference. RCCBs can be installed at any point in the electrical installation and are especially useful where there are many circuits, because a separate RCCB can protect each circuit individually.

ELCBs, however, are normally installed at the distribution board, where the electrical installation begins. Like any protective device, they protect only the circuits downstream of them, not those upstream.

4 Tripping Time

Tripping time is the time a circuit breaker takes to open the circuit after a fault is detected. Because RCCBs detect leakage current more quickly than ELCBs, they usually trip faster, which gives more effective protection against fire and electric shock.

ELCBs, on the other hand, trip more slowly, so they may not provide adequate protection where rapid disconnection is necessary.
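For reference, general-type (non-time-delayed) RCCBs are commonly specified in IEC 61008-1 with maximum break times of 300 ms at the rated residual current IΔn, 150 ms at 2 × IΔn and 40 ms at 5 × IΔn. The sketch below assumes those values (check them against the current edition of the standard) and shows how a measured trip time from a test might be compared with the limit. The step-wise lookup between test points and the function names are illustrative assumptions.

```python
# Assumed maximum break times for general-type RCCBs (IEC 61008-1 test points),
# as (multiple of rated residual current IΔn, max break time in seconds).
MAX_BREAK_TIMES = [(5.0, 0.04), (2.0, 0.15), (1.0, 0.30)]


def max_break_time_s(residual_ma: float, rated_ma: float):
    """Return the break-time limit for a test current, or None below IΔn."""
    multiple = residual_ma / rated_ma
    for min_multiple, limit_s in MAX_BREAK_TIMES:  # checked from the highest multiple down
        if multiple >= min_multiple:
            return limit_s
    return None  # below IΔn the device is not required to trip


def trip_time_ok(measured_s: float, residual_ma: float, rated_ma: float) -> bool:
    """True if the measured trip time is within the assumed limit."""
    limit = max_break_time_s(residual_ma, rated_ma)
    return limit is None or measured_s <= limit


# A 30 mA RCCB tested at 150 mA (5 × IΔn) that opens in 25 ms passes.
print(trip_time_ok(0.025, 150.0, 30.0))  # True
```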

To sum up, RCCBs and ELCBs are both important safety devices that guard against electric shock and fire. Although they share some characteristics, they differ markedly in sensitivity, installation, and tripping time. It’s important to choose the type of circuit breaker that suits the installation’s specific needs and to consult a qualified, licensed electrician for installation and maintenance.

BLOG: TECHNICAL OVERVIEW OF WATER TREATMENT: PROCESSES AND FORMULAS

Water treatment is a complex process that involves a variety of physical, chemical, and biological processes. In this blog post, we will explore the various methods and technologies used in water treatment, with a focus on the technical details and formulas involved.

Screening

The first step in water treatment is usually screening, which removes large debris and particles from the raw water. This is done with a screen or mesh filter that stops large objects from entering the treatment system. Screen fineness is often expressed as mesh size, the number of openings per linear inch; for example, a 200 mesh screen has 200 openings per inch.
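Mesh count alone does not give the size of the openings, because the wires take up part of each inch. For a square-weave screen, the clear opening is the wire pitch (25.4 mm divided by the mesh count) minus the wire diameter. The sketch below assumes a square weave; the 0.053 mm wire diameter in the example is an illustrative value, not a figure from this post.

```python
MM_PER_INCH = 25.4


def mesh_aperture_mm(mesh_count: float, wire_diameter_mm: float) -> float:
    """Clear opening between adjacent wires of a square-weave screen, in mm."""
    pitch_mm = MM_PER_INCH / mesh_count  # centre-to-centre wire spacing
    aperture_mm = pitch_mm - wire_diameter_mm
    if aperture_mm <= 0:
        raise ValueError("wire diameter is too large for this mesh count")
    return aperture_mm


# 200 mesh with 0.053 mm wire: pitch 0.127 mm, opening about 0.074 mm (74 µm).
print(round(mesh_aperture_mm(200, 0.053), 3))  # 0.074
```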

Coagulation and Flocculation

The next step is coagulation and flocculation. A coagulant is added to neutralize the electrical charges that keep fine particles suspended; gentle mixing then lets the destabilized particles clump into larger aggregates, called floc, which are much easier to remove. Two of the most widely used coagulants are aluminum sulfate (Al2(SO4)3) and ferric chloride (FeCl3).

The right coagulant dose is usually found with a jar test: several samples of the same raw water are each mixed with a different dose of coagulant, and the resulting floc formation is compared. The dose that produces the best floc is taken as the optimum.
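Picking the optimum from jar-test results is a simple search. The sketch below assumes each jar is scored by its settled-water turbidity (lower is better) and breaks ties toward the lower dose to save chemical. The scoring criterion, the function name, and the sample readings are all hypothetical, for illustration only.

```python
def optimal_dose(jar_results: dict[float, float]) -> float:
    """Return the coagulant dose (mg/L) giving the lowest settled turbidity (NTU).

    Ties are broken in favour of the smaller dose.
    """
    if not jar_results:
        raise ValueError("no jar-test results supplied")
    return min(jar_results, key=lambda dose: (jar_results[dose], dose))


# Hypothetical jar test: dose in mg/L -> settled turbidity in NTU.
readings = {10: 4.2, 20: 1.8, 30: 1.1, 40: 1.3, 50: 2.0}
print(optimal_dose(readings))  # 30
```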

Sedimentation

After coagulation and flocculation, the water flows into a sedimentation tank, where the floc settles to the bottom and is removed. The settling velocity of a small particle can be estimated with Stokes’ Law, which states that it is proportional to the difference in density between the particle and the fluid and to the square of the particle’s radius, and inversely proportional to the fluid’s viscosity:

$latex V = \frac{2}{9}\frac{(\rho_p - \rho_f)\, g\, r^2}{\mu} $

where V is the settling velocity, ρp is the density of the particle, ρf is the density of the fluid, g is the acceleration due to gravity, r is the radius of the particle, and μ is the dynamic viscosity of the fluid. Stokes’ Law assumes a rigid sphere in laminar flow (a very low particle Reynolds number), so for irregular floc it gives an estimate rather than an exact value.
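Stokes’ Law translates directly into code. The sketch below uses SI units and illustrative values: a sand-like particle (density 2650 kg/m³) of 10 µm radius settling in water at about 20 °C (density 1000 kg/m³, dynamic viscosity about 1.0 × 10⁻³ Pa·s). Like the law itself, it is only valid in the laminar, very-low-Reynolds-number regime.

```python
def stokes_settling_velocity(radius_m: float, particle_density: float,
                             fluid_density: float, viscosity_pa_s: float,
                             g: float = 9.81) -> float:
    """Terminal settling velocity (m/s) of a small sphere from Stokes' law.

    V = (2/9) * (particle_density - fluid_density) * g * r**2 / viscosity
    A negative result means the particle rises instead of settling.
    """
    return ((2.0 / 9.0) * (particle_density - fluid_density) * g
            * radius_m ** 2 / viscosity_pa_s)


v = stokes_settling_velocity(radius_m=10e-6, particle_density=2650.0,
                             fluid_density=1000.0, viscosity_pa_s=1.0e-3)
print(f"{v:.2e} m/s")  # 3.60e-04 m/s
```

Because the velocity scales with r², halving the particle radius cuts the settling velocity to a quarter, which is why growing larger floc before sedimentation matters so much.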

Filtration

After sedimentation, the water is sent through a series of filters to remove remaining impurities. The two primary types of filters used in water treatment are rapid sand filters and granular activated carbon (GAC) filters.

Rapid sand filters are typically composed of multiple layers of sand and gravel, with the largest particles at the bottom and the smallest particles at the top. As water passes through the filter, impurities are trapped in the sand and gravel layers. The effectiveness of a sand filter can be measured using the head loss method, which involves measuring the pressure drop across the filter as water flows through it.
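Head loss is often expressed as an equivalent height of water, obtained by dividing the pressure drop across the bed by ρg (water density times gravitational acceleration). The sketch below converts two pressure readings into head loss and flags the filter for backwashing once a terminal head loss is reached. The 2.5 m terminal value and the function names are hypothetical, since the appropriate limit depends on the filter design.

```python
WATER_DENSITY = 1000.0  # kg/m^3, assumed constant
G = 9.81                # m/s^2


def head_loss_m(upstream_pa: float, downstream_pa: float) -> float:
    """Head loss across the filter bed, in metres of water."""
    return (upstream_pa - downstream_pa) / (WATER_DENSITY * G)


def needs_backwash(upstream_pa: float, downstream_pa: float,
                   terminal_head_loss_m: float = 2.5) -> bool:
    """True once the head loss reaches the (hypothetical) terminal value."""
    return head_loss_m(upstream_pa, downstream_pa) >= terminal_head_loss_m


# A 29.43 kPa pressure drop corresponds to 3.0 m of head loss.
print(head_loss_m(29_430.0, 0.0))     # 3.0
print(needs_backwash(29_430.0, 0.0))  # True
```

Rising head loss over a filter run reflects impurities accumulating in the bed, so tracking it over time shows when the filter needs cleaning.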

GAC filters are composed of activated carbon particles, which have a large surface area and can adsorb a variety of organic and inorganic compounds from the water. The effectiveness of a GAC filter can be measured using the breakthrough curve method, which involves monitoring the concentration of a target compound in the filtered water over time.
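A breakthrough curve is a series of effluent concentrations C measured over time, usually normalised by the influent concentration C₀. The sketch below reports the first sampling time at which C/C₀ reaches a chosen breakthrough fraction. The 5% threshold, the function name, and the sample data are hypothetical; in practice the threshold follows from the treatment target for the compound.

```python
def breakthrough_time(times, effluent_conc, influent_conc, fraction=0.05):
    """First sample time at which C/C0 reaches `fraction`, or None if never."""
    if influent_conc <= 0:
        raise ValueError("influent concentration must be positive")
    for t, c in zip(times, effluent_conc):
        if c / influent_conc >= fraction:
            return t
    return None


# Hypothetical data: hours of operation vs. effluent concentration (µg/L),
# with a constant influent concentration of 100 µg/L.
hours = [0, 100, 200, 300, 400]
effluent = [0.0, 0.5, 2.0, 8.0, 20.0]
print(breakthrough_time(hours, effluent, influent_conc=100.0))  # 300
```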

Disinfection

After filtration, the water is disinfected to inactivate any remaining bacteria and viruses. The most common disinfectant in water treatment is chlorine, which is dosed carefully to make the water safe to drink. When chlorine is added, it reacts with the water to form hypochlorous acid (HOCl), a strong oxidizing agent that kills bacteria and viruses. Organic matter and other impurities in the water also consume chlorine, which is why they affect the dose required.

The amount of chlorine needed depends mainly on the water’s chlorine demand, that is, how much chlorine is consumed by organic matter and other reducing substances before any residual can remain. The dose must cover this demand and still leave the desired free chlorine residual:

$latex C_{dose} = C_{demand} + C_{residual} $

and the chlorine feed rate follows from the dose and the flow rate:

$latex \dot{m} = C_{dose}\, Q $

where Cdose is the chlorine dose, Cdemand is the chlorine demand of the water, Cresidual is the desired residual chlorine concentration, Q is the flow rate of the water, and ṁ is the chlorine feed rate (mass of chlorine per unit time).
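A common way to size chlorination is to add the water’s chlorine demand to the free residual you want to maintain, then multiply that dose by the flow to get a feed rate. Because 1 mg/L equals 1 g/m³, a dose in mg/L times a flow in m³/day gives grams per day. The sketch below assumes that approach; the demand, residual, and flow figures are hypothetical, and `available_fraction` is an illustrative parameter for products, such as sodium hypochlorite solution, whose available chlorine content is less than 100%.

```python
def chlorine_dose_mg_per_l(demand_mg_per_l: float, residual_mg_per_l: float) -> float:
    """Dose needed to satisfy the chlorine demand and leave the target residual."""
    return demand_mg_per_l + residual_mg_per_l


def chlorine_feed_kg_per_day(dose_mg_per_l: float, flow_m3_per_day: float,
                             available_fraction: float = 1.0) -> float:
    """Mass of product to feed per day.

    1 mg/L == 1 g/m^3, so dose * flow is in g/day; divide by 1000 for kg/day.
    available_fraction is the share of available chlorine in the product
    (1.0 for pure chlorine).
    """
    if not 0 < available_fraction <= 1:
        raise ValueError("available_fraction must be in (0, 1]")
    return dose_mg_per_l * flow_m3_per_day / 1000.0 / available_fraction


# Hypothetical plant: demand 1.5 mg/L, target residual 0.5 mg/L, 10,000 m^3/day.
dose = chlorine_dose_mg_per_l(1.5, 0.5)
print(dose)                                    # 2.0
print(chlorine_feed_kg_per_day(dose, 10_000))  # 20.0
```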

Once the chlorine has been added, the water is usually held in a contact tank so that the chlorine has enough time to inactivate bacteria and viruses. The required contact time depends on factors such as the chlorine concentration, the amount of organic matter, and the water’s temperature and pH, but is typically around 30 minutes.
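Contact time and chlorine concentration are often combined into a single CT value: the residual disinfectant concentration C (mg/L) multiplied by the contact time T (minutes). The sketch below computes CT and compares it with a required value. The 0.5 mg/L residual is illustrative and the required CT is a hypothetical input, because real CT requirements depend on the target organism, the water temperature, and pH.

```python
def ct_value(residual_mg_per_l: float, contact_time_min: float) -> float:
    """Disinfection CT in mg·min/L: residual concentration times contact time."""
    return residual_mg_per_l * contact_time_min


def meets_ct(residual_mg_per_l: float, contact_time_min: float,
             required_ct: float) -> bool:
    """True if the achieved CT reaches the (hypothetical) required CT."""
    return ct_value(residual_mg_per_l, contact_time_min) >= required_ct


# 0.5 mg/L held for 30 minutes gives CT = 15 mg·min/L.
print(ct_value(0.5, 30))  # 15.0
```

Raising either the residual or the contact time increases CT, so a plant with a short contact tank has to compensate with a higher residual.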

pH Adjustment

In addition to disinfection, the pH of the water may need to be adjusted. The recommended pH range for drinking water is typically 6.5 to 8.5. Water with a low pH tends to corrode pipes and other infrastructure, while water with a high pH can cause scale deposits; both extremes can also affect the taste of the water.

The pH of the water can be adjusted using various chemicals, including sodium carbonate (Na2CO3) and sodium hydroxide (NaOH). The amount of chemical needed to adjust the pH depends on the initial pH of the water and the desired pH.

Conclusion

Water treatment is a critical process that ensures the safety and quality of our drinking water. The various steps involved in water treatment, including screening, coagulation and flocculation, sedimentation, filtration, disinfection, and pH adjustment, require a combination of physical, chemical, and biological processes. Understanding the technical details and formulas involved in these processes is crucial to developing effective water treatment systems that meet the needs of communities around the world.

BLOG: THE ESSENTIAL GUIDE TO ROPES IN ENGINEERING: TYPES, USES AND MANUFACTURING

Ropes are essential tools that have been used for centuries, and they are still widely used in engineering today. They are made up of fibers or wires that are twisted together to form a strong, flexible, and durable material that can be used for a wide range of applications. In this blog post, we will explore the different types of ropes, their uses in engineering, and how they are made.

Types of Ropes

There are several types of ropes, each with its unique characteristics and uses. Some of the most common types of ropes used in engineering include:

- Synthetic Ropes: These ropes are made from synthetic materials such as polyester, nylon, and polypropylene. They are lightweight, strong, and generally resistant to chemicals. UV resistance varies by material: polyester performs well, while polypropylene degrades relatively quickly in sunlight.

- Wire Ropes: Wire ropes are made from strands of steel wire twisted together. They are very strong and durable and resist abrasion well; galvanized or stainless-steel wire ropes also offer good corrosion resistance.

- Natural Fiber Ropes: Natural fiber ropes are made from materials such as hemp, sisal, and cotton. They are biodegradable, cost-effective, and have excellent grip characteristics.

Uses of Ropes in Engineering

Ropes have a wide range of uses in engineering, including:

- Lifting and Rigging: Ropes are commonly used in lifting and rigging applications, such as crane operations, hoists, and pulleys.

- Transportation: Ropes are used in transportation applications, such as mooring ships, towing vehicles, and securing cargo.

- Construction: Ropes are used in construction applications, such as scaffolding, safety lines, and bridge construction.

- Rescue Operations: Ropes are commonly used in rescue operations, such as in mountain climbing, search and rescue, and firefighting.

How Ropes are Made

Ropes are made by twisting fibers or wires together to form a strong, durable product, and the specific method depends on the type of rope. For example, the fibers for synthetic ropes are produced by extruding the polymer through a spinneret to form continuous filaments; these are twisted into yarns, the yarns into strands, and the strands into the final rope.

Conclusion

Ropes are an essential tool in engineering and have been used for centuries. They are made from a variety of materials, including synthetic fibers, steel wires, and natural fibers, and have a wide range of applications. Understanding the different types of ropes, their uses in engineering, and how they are made is essential for anyone working in the field of engineering.

BLOG: THE BASICS OF TRANSISTORS: STRUCTURE, OPERATION AND APPLICATIONS

Transistors are an essential component of modern electronics. They are used in a variety of applications, including amplifiers, switches, and digital circuits. In this blog post, we will explore the basics of transistors, including their structure, operation, and applications.

Structure of Transistors

There are two main types of transistors: bipolar junction transistors (BJTs) and field-effect transistors (FETs). Both are three-terminal semiconductor devices. A BJT is made up of three alternating layers of p-type and n-type semiconductor, and its terminals are called the emitter, base, and collector. The terminals of a FET are called the source, gate, and drain.

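BJTs have two p-n junctions (base-emitter and base-collector) and come in npn and pnp versions. A FET instead conducts through a single channel of n-type or p-type semiconductor, giving n-channel and p-channel devices; the gate controls the channel through a reverse-biased p-n junction in a JFET, or through a thin insulating oxide layer in a MOSFET.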

Operation of Transistors

In a BJT, a small current flowing into the base controls a much larger current between the collector and emitter. Applying a voltage across the base-emitter junction (about 0.7 V for a silicon transistor) forward-biases it so that base current flows, and this in turn allows collector current to flow. In the active region, the collector current is approximately the base current multiplied by the transistor’s current gain, β. In a FET, the voltage applied to the gate controls the current flowing between the drain and source.
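A BJT’s behaviour can be approximated with a very simple model: no collector current below the base-emitter drop (cut-off), collector current equal to β times the base current in the active region, and a ceiling set by the collector resistor once the transistor saturates. The sketch below assumes an npn transistor in a common-emitter circuit with a base resistor and a collector resistor; β = 100, V_BE = 0.7 V and V_CE(sat) = 0.2 V are typical illustrative values, not data for any particular part.

```python
def collector_current_a(v_in: float, r_base: float, r_collector: float, v_cc: float,
                        beta: float = 100.0, v_be: float = 0.7,
                        v_ce_sat: float = 0.2) -> float:
    """Idealised npn common-emitter model: cut-off, active, or saturated."""
    if v_in <= v_be:
        return 0.0                                  # cut-off: no base current
    i_base = (v_in - v_be) / r_base
    i_active = beta * i_base                        # active region: Ic = beta * Ib
    i_saturation = (v_cc - v_ce_sat) / r_collector  # limit set by the load resistor
    return min(i_active, i_saturation)


# 5 V supply, 1 kΩ collector resistor, 5 V input.
ic_active = collector_current_a(5.0, r_base=1e6, r_collector=1e3, v_cc=5.0)
ic_saturated = collector_current_a(5.0, r_base=10e3, r_collector=1e3, v_cc=5.0)
print(f"{ic_active * 1e3:.2f} mA")     # 0.43 mA
print(f"{ic_saturated * 1e3:.2f} mA")  # 4.80 mA
```

With the 1 MΩ base resistor the transistor amplifies: the collector current is 100 times the base current. With 10 kΩ the base drive would allow 43 mA, but the collector resistor limits the current to about 4.8 mA, so the transistor is saturated and behaves like a closed switch.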

Applications of Transistors

Transistors have a wide range of applications in electronic devices. They are commonly used in amplifiers to increase the strength of a signal. For example, a small electrical signal from a microphone can be amplified using a transistor to drive a speaker.

Transistors are also used in switching applications. When used as a switch, a transistor can turn a circuit on or off, depending on the voltage applied to the base terminal. This makes them ideal for use in digital circuits, where they can be used to create logic gates, flip-flops, and other digital components.
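To see how switching transistors become logic gates, the sketch below treats each transistor as an ideal switch that conducts when its input is high. Two transistors in parallel pulling a resistor-loaded output to ground give a NOR gate (as in resistor-transistor logic), and two in series give a NAND gate. This is a behavioural model under that idealised-switch assumption, not a circuit simulation, and the function names are illustrative.

```python
from itertools import product


def switch_closed(base_high: bool) -> bool:
    """Ideal npn switch: conducts collector-to-emitter when the base is driven high."""
    return base_high


def inverter(a: bool) -> bool:
    # One transistor pulls the output low; otherwise the resistor pulls it high.
    return not switch_closed(a)


def nor_gate(a: bool, b: bool) -> bool:
    # Transistors in parallel: either one conducting pulls the output low.
    return not (switch_closed(a) or switch_closed(b))


def nand_gate(a: bool, b: bool) -> bool:
    # Transistors in series: both must conduct to pull the output low.
    return not (switch_closed(a) and switch_closed(b))


for a, b in product([False, True], repeat=2):
    print(int(a), int(b), "NOR:", int(nor_gate(a, b)), "NAND:", int(nand_gate(a, b)))
```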

Conclusion

Transistors are an essential component of modern electronics. They are used in a variety of applications, including amplifiers, switches, and digital circuits. Understanding the basics of transistors, including their structure and operation, is important for anyone interested in electronics. Whether you are a hobbyist or a professional, transistors are an essential component of any electronic project.

BLOG: 10 Fascinating Facts Every Mechanical Engineer Should Know

Mechanical engineering is a fascinating field that involves the design, analysis, and production of machines, structures, and systems. If you’re a mechanical engineer or someone interested in the field, there are many interesting facts that you should know. Here are ten of them:

- The Pascaline, one of the first mechanical calculators, was invented by Blaise Pascal in 1642. It could perform addition and subtraction.

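- James Watt did not invent the steam engine, but his 18th-century improvements, notably the separate condenser patented in 1769, made it far more efficient and turned it into a crucial technology of the Industrial Revolution. It transformed the way energy was produced and used, and paved the way for modern machinery.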

- The first practical gasoline-powered automobile, the Benz Patent-Motorwagen, was built by Karl Benz in 1885. It was a three-wheeled vehicle with a single-cylinder four-stroke engine.

- The Wright Brothers’ first flight in 1903 was made possible by their invention of the three-axis control system, which allowed them to steer their aircraft in flight.

- The Eiffel Tower, one of the world’s most famous structures, was built by Gustave Eiffel’s company for the 1889 World’s Fair in Paris; its design is credited largely to Eiffel’s engineers Maurice Koechlin and Émile Nouguier. It was the tallest man-made structure in the world at the time.

- The USS Holland, designed by John Philip Holland and commissioned by the U.S. Navy in 1900, is often regarded as the first modern submarine. It used a gasoline engine on the surface and an electric motor underwater.

- Frank Whittle ran his first jet engine in 1937, and Hans von Ohain developed one independently in Germany at about the same time. The jet engine revolutionized aviation and paved the way for supersonic flight.

- The first human-to-human heart transplant was performed by Dr. Christiaan Barnard in 1967. The artificial heart was a separate development: the Jarvik-7, designed by Robert Jarvik, was implanted as a permanent replacement heart in 1982.

- NASA’s Mars rovers, starting with Sojourner, which landed on Mars in 1997, have been designed and built by teams of engineers, many of them mechanical engineers, at NASA’s Jet Propulsion Laboratory.

- The tallest building in the world, the Burj Khalifa in Dubai, was designed by architect Adrian Smith of Skidmore, Owings & Merrill, with structural engineering led by William F. Baker. It stands 828 meters (about 2,717 feet) tall and has more than 160 floors.

Mechanical engineering is a field that has transformed the world we live in, and these ten facts only scratch the surface of the many incredible achievements that mechanical engineers have made throughout history. Whether you’re a mechanical engineer or just interested in the field, there’s no shortage of fascinating stories to discover.

BLOG: 10 Essential Facts Every Electrical Engineer Needs to Know

As an electrical engineer, you have a unique set of knowledge and skills that enable you to design, develop, and maintain electrical systems. Here are 10 interesting facts that every electrical engineer should know:

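- Electrical signals travel along conductors at a large fraction of the speed of light (about 186,000 miles per second in a vacuum); the exact fraction, called the velocity factor, depends on the cable. The electrons themselves drift far more slowly.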

- The first electrical generator was invented by Michael Faraday in 1831, which paved the way for the widespread use of electricity.

- Thomas Edison’s Pearl Street Station, one of the first commercial central power stations, opened in 1882 and supplied electricity to customers in Lower Manhattan, New York City.

- The SI unit of power, the watt, is named after Scottish engineer James Watt.

- The International System of Units (SI) unit for electric current is the ampere, named after French physicist André-Marie Ampère.

- Electrical engineers are responsible for designing and maintaining power grids, which distribute electricity to homes, businesses, and industries.

- Electrical engineers must take into account the properties of materials when designing electrical systems, including conductivity, resistance, and dielectric strength.

- Electrical engineers must also consider the effects of electromagnetic interference (EMI) and electromagnetic compatibility (EMC) when designing systems that operate in close proximity to other electrical devices.

- Electrical engineers must stay up-to-date with the latest developments in renewable energy technologies, including solar, wind, and hydroelectric power.

- Electrical engineers play a critical role in ensuring the safety and reliability of electrical systems, from household appliances to large-scale power grids.
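The speed of an electrical signal in a cable is usually expressed as a velocity factor, the fraction of the vacuum speed of light at which the signal travels. The sketch below turns a cable length and velocity factor into a propagation delay. The velocity factor of 0.66 in the example is an assumed value (roughly what is often quoted for solid-polyethylene coaxial cable), so a real calculation should use the figure from the cable’s datasheet.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact value in a vacuum


def propagation_delay_s(length_m: float, velocity_factor: float) -> float:
    """Time for a signal to travel length_m along a cable with the given velocity factor."""
    if not 0 < velocity_factor <= 1:
        raise ValueError("velocity factor must be in (0, 1]")
    return length_m / (velocity_factor * SPEED_OF_LIGHT_M_PER_S)


# 100 m of cable with an assumed velocity factor of 0.66.
print(f"{propagation_delay_s(100.0, 0.66) * 1e9:.0f} ns")  # 505 ns
```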

In conclusion, these are just a few of the many interesting facts that every electrical engineer should know. By staying informed, developing their skills, and applying their knowledge to real-world problems, electrical engineers can make a significant impact in their field and help create a more sustainable and interconnected world.

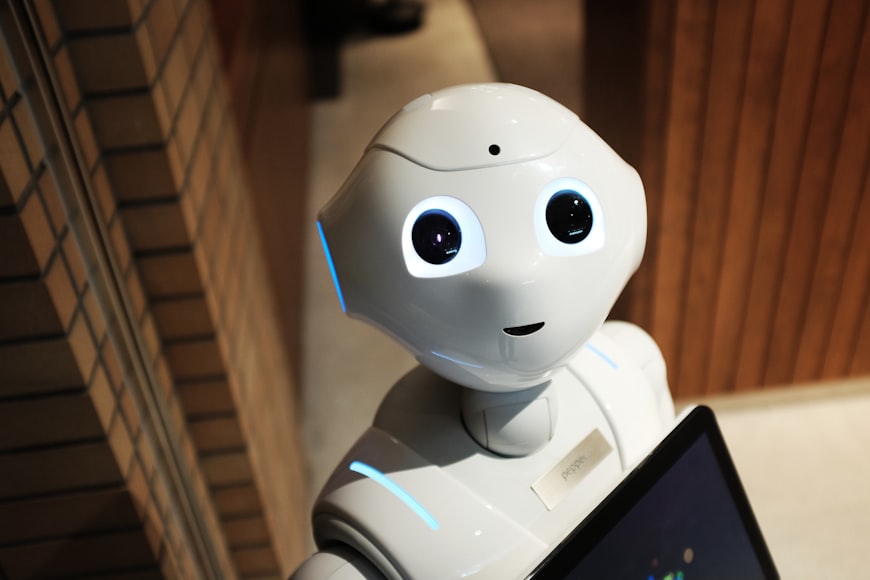

BLOG: Revolutionizing Engineering: The Power of Artificial Intelligence

Artificial Intelligence (AI) is rapidly transforming the field of engineering, and its potential applications are wide-ranging. From automating tedious tasks to optimizing complex systems, AI is revolutionizing the way we approach engineering problems.

At its core, AI is all about developing algorithms that can learn from data and make predictions or decisions based on that data. This can be incredibly powerful in engineering, where we often deal with large amounts of data and complex systems that are difficult to optimize manually.

One of the most promising applications of AI in engineering is in the realm of predictive maintenance. By analyzing sensor data from machines and equipment, AI algorithms can identify patterns that indicate when maintenance is needed, allowing engineers to address potential issues before they become major problems. This can help to reduce downtime, improve reliability, and ultimately save money.
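Predictive-maintenance systems may use trained machine-learning models, but the underlying idea of flagging sensor readings that break from recent patterns can be sketched with a much simpler statistical baseline. The example below swaps in a rolling z-score rather than a learned model: each reading is compared with the mean and standard deviation of the previous `window` readings. The window size, threshold, function name, and vibration values are hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=20, z_threshold=3.0):
    """Return indices of readings far from the mean of the preceding window."""
    if window < 2:
        raise ValueError("window must contain at least two readings")
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            is_anomaly = readings[i] != mu  # flat baseline: any change stands out
        else:
            is_anomaly = abs(readings[i] - mu) / sigma > z_threshold
        if is_anomaly:
            flagged.append(i)
    return flagged


# Hypothetical vibration amplitudes (mm/s): steady operation, then a sudden spike.
vibration = [1.0, 1.1] * 10 + [5.0]
print(flag_anomalies(vibration))  # [20]
```

A flagged index would prompt an inspection before the fault develops; a learned model plays the same role but can capture more complex patterns than a single threshold.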

Another area where AI is making a big impact is in the design process. By using machine learning algorithms to analyze large datasets of design information, engineers can identify patterns and optimize designs more quickly and accurately than ever before. This can help to speed up the design process and improve the quality of the final product.

AI is also being used to optimize manufacturing processes, by identifying the most efficient ways to produce products and reducing waste. This can help to improve productivity and reduce costs, while also minimizing the environmental impact of manufacturing.

Of course, like any new technology, AI also presents some challenges and risks. One of the biggest concerns is the potential for bias in AI algorithms, which can lead to unintended consequences and unfair outcomes. As engineers, it’s important to be aware of these risks and work to mitigate them through careful design and testing.

Overall, the potential benefits of AI in engineering are enormous, and we’re only just scratching the surface of what’s possible. As the technology continues to evolve and improve, we can expect to see even more exciting developments in the years ahead.

BLOG: HUSTLING VS SLOW BUSINESS

1 Are there any scientific articles on hustling vs. slow business?

Yes, there are scientific articles on the topic of hustling versus slow business. Here are a few examples:

- “The Slow Business Revolution: Challenges and Opportunities in the Digital Age” by Nicolai Foss and Soren Jensen. This article argues that in today’s fast-paced business environment, there is a growing trend towards “slow business” – businesses that prioritize quality over speed and take a more thoughtful approach to decision-making. The authors discuss the benefits and challenges of this approach, and suggest ways that businesses can successfully navigate the shift towards slower, more deliberate practices.

- “The Hustle Economy: Transforming Your Creativity into a Career” by Jason W. Womack. This book explores the concept of “hustling” – the idea that success in today’s economy requires a relentless focus on self-promotion, networking, and rapid-fire decision-making. The author provides tips and strategies for individuals looking to succeed in this environment, including ways to build a personal brand and cultivate a strong work ethic.

- “The Slow Movement and Sustainable Entrepreneurship” by Erika L. Douglas and Mark A. Bonn. This article examines the relationship between the “slow movement” – a cultural trend that emphasizes a slower, more deliberate pace of life – and sustainable entrepreneurship. The authors argue that slow business practices can be an effective way to build sustainable, socially responsible businesses that prioritize long-term growth and impact over short-term profits.

Overall, there is growing interest in the topic of hustling vs. slow business, and many researchers and business leaders are exploring the pros and cons of each approach.

2 Which approach is better for business? Slow business or hustling?

There is no one-size-fits-all answer to this question, as the best approach for a given business will depend on a range of factors, including its industry, size, goals, and values. However, it is worth considering some of the potential advantages and disadvantages of each approach:

Advantages of slow business:

- Allows for more thoughtful decision-making and planning

- Can result in higher-quality products or services

- May lead to more sustainable, long-term growth

- May be more aligned with certain values, such as environmental sustainability or social responsibility

Disadvantages of slow business:

- May be perceived as lacking urgency or competitiveness

- May struggle to keep up with rapidly changing market conditions or customer demands

- May require a greater upfront investment of time, money, or resources

- May be less appealing to investors or other stakeholders who prioritize rapid growth or profitability

Advantages of hustling:

- Can lead to rapid growth and market success

- May attract more attention from investors or other stakeholders

- Allows for quick adaptation to changing market conditions or customer demands

- Can help build a strong personal brand or network

Disadvantages of hustling:

- Can lead to burnout or a focus on short-term gains over long-term sustainability

- May result in lower-quality products or services due to rushed decision-making or processes

- May be more stressful or less fulfilling for business owners or employees

- May be perceived as opportunistic or lacking authenticity

In summary, both slow business and hustling have their advantages and disadvantages, and the best approach for a given business will depend on a range of factors. It is important for business owners to carefully consider their goals, values, and resources before choosing an approach.

3 Which approach is better for passive income? Hustling or slow business?

When it comes to generating passive income, a slow business approach is generally considered to be more effective than hustling. This is because passive income typically requires a more long-term, sustainable approach that allows for steady growth over time. Here are some reasons why slow business may be better suited for generating passive income:

4 In the slow business logic do I need to do everything slowly?

No, in the slow business approach, it’s not necessary to do everything slowly. Rather, slow business is about prioritizing quality, sustainability, and ethical practices over rapid growth and short-term profits. This approach may involve taking the time to develop meaningful relationships with customers, suppliers, and other stakeholders, ensuring that your products and services are of the highest quality, and considering the environmental and social impact of your business practices.

While slow business does emphasize a more thoughtful and deliberate approach to entrepreneurship, it doesn’t necessarily mean that everything needs to be done slowly. In fact, there may be times when moving quickly is necessary, such as when responding to customer needs or adapting to changes in the marketplace.

Ultimately, slow business is about finding the right balance between speed and quality, and making decisions that prioritize the long-term success of your business over short-term gains. By adopting this approach, you can build a sustainable, ethical business that prioritizes the needs of your stakeholders and contributes to a more just and equitable society.

5 Hustling vs slow business as an engineer?

As an engineer, the choice between hustling and slow business will depend on a number of factors, including your individual goals, circumstances, and values.

Hustling as an engineer may involve taking on multiple projects simultaneously, working long hours, and pushing yourself to meet tight deadlines. This approach may be necessary if you have a lot of work to do in a short amount of time, or if you’re working on a project with a tight deadline. However, it can also carry risks, such as burnout and decreased quality.

On the other hand, a slow business approach as an engineer may prioritize quality and consistency over speed. This approach may involve taking on fewer projects at a time, but dedicating more time and attention to each project. This can result in higher-quality work and more satisfied clients, but may also mean slower progress towards your goals.

Ultimately, the best approach will depend on your individual circumstances and goals as an engineer. It’s important to consider factors such as project timelines, available resources, and your personal values when making decisions about your approach to your work.

BLOG: How Engineers are Using Augmented Reality to Transform the Construction Industry

As the construction industry continues to evolve, engineers are constantly exploring new technologies to help them design, plan, and execute projects more efficiently. One of the most exciting developments in recent years has been the emergence of augmented reality (AR) as a powerful tool for engineers in the construction industry.

AR technology allows engineers to overlay digital images and information on top of the real-world environment, providing them with a highly detailed, 3D view of a construction site or building. This technology has the potential to transform the way engineers work, improving accuracy, efficiency, and safety on construction sites.

Here are just a few of the ways engineers are using AR technology to transform the construction industry:

- Improved Design Visualization: With AR, engineers can overlay realistic 3D models of planned buildings onto the real site. This gives them a better sense of how a project will look in the real world, so they can make adjustments before construction even begins. By visualizing a project in detail beforehand, engineers can identify and address potential issues early, saving time and money in the long run.

- Enhanced Collaboration: AR technology also makes it easier for engineers to collaborate with other professionals involved in a project, such as architects, contractors, and project managers. By sharing a common digital platform, everyone can see the same 3D models and annotations, making it easier to communicate ideas and solve problems together.

- Safer Work Environments: One of the most important benefits of AR is improved safety on construction sites. With a 3D model of the site, engineers can identify potential hazards and design solutions that minimize risk. AR can also provide training simulations for workers, helping them learn the necessary skills and safety protocols in a safe, virtual environment.

- More Efficient Project Management: AR can also make project management more efficient. Using a detailed 3D model of the site, engineers can prepare accurate schedules and cost estimates. They can also use the technology to monitor progress and spot potential delays or bottlenecks, adjusting the project plan as needed to keep everything on track.

In conclusion, augmented reality technology is quickly becoming a game-changer for engineers in the construction industry. By providing a highly detailed, 3D view of construction sites and buildings, AR technology can improve accuracy, efficiency, and safety, while also making it easier for engineers to collaborate and manage projects. As this technology continues to evolve, we can expect to see even more innovative uses in the construction industry in the years to come.